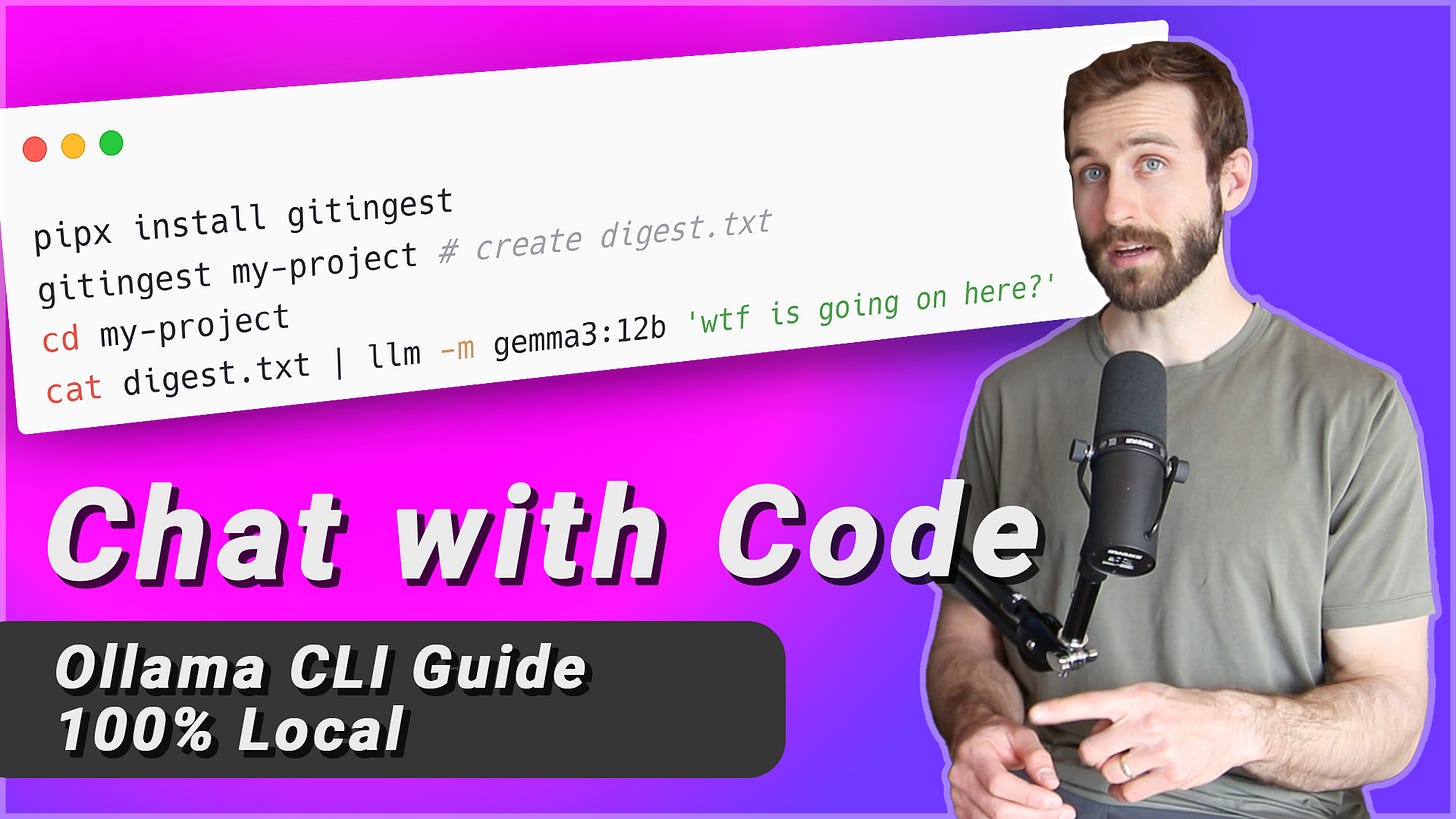

Gitingest — Convert GitHub repos into a clean, LLM-friendly format

This is not sponsored. I made it for two reasons:

Bring your attention to gitingest (which is a neat little tool).

Show you how to chat with codebases using small, local models (like gemma3).

Check out the video on YouTube

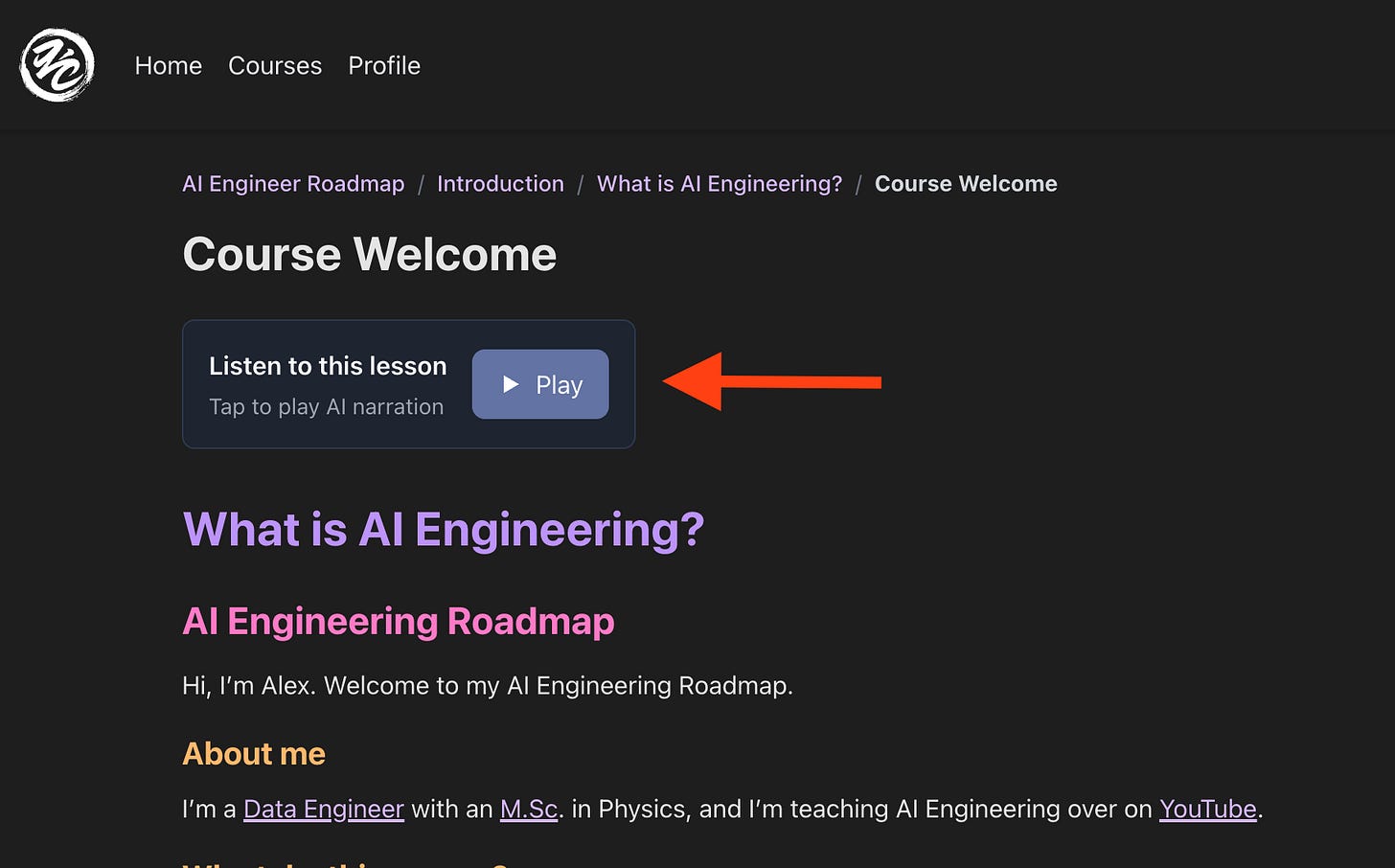

Course Update: Audio

Lesson audio is here! 🎉

I’ve added AI narrations for all 96 lessons.

I even transcribed the math and code snippets.

Have a listen and let me know what you think.

Topics from this week’s video

Gitingest Overview

Tool types: web app, CLI tool, and client library

Converts GitHub repositories into LLM-friendly text files

Specialized utility to help language models understand codebases

Video Purpose

Showcase Gitingest's functionality

Demonstrate integration with local language models

Share use cases and terminal workflows

Web Application Walkthrough

Ingests a Git repo and generates:

Summary (repo name, file count, token count)

Directory tree (Git-like file structure)

Flattened text digest for LLM input

Includes options to filter contents (e.g., only source folder)

Example repo used: neo-brutalism-styled component library
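
To make the three outputs concrete, here is a minimal sketch of a digest builder using only the Python standard library. It is an illustration of the idea, not Gitingest's actual implementation: the function name `build_digest`, the separator format, and the 4-characters-per-token estimate are all assumptions made for this example.

```python
from pathlib import Path


def build_digest(root: str) -> tuple[str, str, str]:
    """Return (summary, tree, content) for the text files under root.

    Hypothetical helper for illustration; not part of Gitingest.
    """
    root_path = Path(root)
    tree_lines = [f"{root_path.name}/"]
    chunks = []

    for path in sorted(root_path.rglob("*")):
        rel = path.relative_to(root_path)
        indent = "    " * len(rel.parts)  # one indent level per path depth
        if path.is_dir():
            tree_lines.append(f"{indent}{rel.name}/")
            continue
        tree_lines.append(f"{indent}{rel.name}")
        try:
            text = path.read_text(encoding="utf-8")
        except (UnicodeDecodeError, OSError):
            continue  # skip binary or unreadable files
        header = "=" * 40
        chunks.append(f"{header}\nFILE: {rel.as_posix()}\n{header}\n{text}")

    content = "\n\n".join(chunks)
    # Assumption: roughly 4 characters per token, a crude rule of thumb.
    summary = (
        f"Directory: {root_path.name}\n"
        f"Files analyzed: {len(chunks)}\n"
        f"Estimated tokens: {len(content) // 4}"
    )
    return summary, "\n".join(tree_lines), content
```

Calling `build_digest(".")` on a small project returns the summary, the tree, and one long string you could paste into a model prompt.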

CLI Tool Installation and Setup

Recommended install via `pipx` for isolated environments

Demonstrated use of `which` and symlink tracing

Pipx installs to virtual env and links executable

Local ingestion example: Discord bot repo

Ingests current directory

Warns of including sensitive files like credentials

Demonstrates excluding specific files with the `-e` flag
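
The exclusion idea can be sketched in a few lines of Python. This is a rough approximation with glob patterns, assuming paths use forward slashes; the helper names `is_excluded` and `filter_paths` are hypothetical, and Gitingest's real matching rules may differ.

```python
from fnmatch import fnmatchcase


def is_excluded(rel_path: str, patterns: list[str]) -> bool:
    """True if the path, or just its file name, matches any glob pattern."""
    name = rel_path.rsplit("/", 1)[-1]
    return any(
        fnmatchcase(rel_path, pattern) or fnmatchcase(name, pattern)
        for pattern in patterns
    )


def filter_paths(paths: list[str], exclude: list[str]) -> list[str]:
    """Drop every path that matches an exclude pattern, keeping order."""
    return [p for p in paths if not is_excluded(p, exclude)]
```

For a bot repo, something like `filter_paths(paths, [".env", "*secrets*"])` keeps credentials out of the text you hand to a model.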

Using Gitingest Output with Local LLMs

Runs Gemma 3 model locally via the `llm` CLI tool

Uses 4B and 12B parameter versions

Prompts include “Explain like I’m 5” style questions

Shows system GPU usage and model performance

Highlights pros and cons:

Free local inference

Occasional infinite loops (e.g., in Gemma 3)

Performance monitored with `nvtop`

Improving Workflow

Demonstrates aliasing repetitive commands (`dq`)

Uses digest + prompt combo for streamlined querying

Real-time demo of model response latency
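
The digest + prompt combo could also be wrapped in a small Python helper instead of a shell alias. This is a sketch under assumptions: it expects the `llm` CLI on your PATH, and the model ID `gemma3:4b` is a guess that depends on which `llm` plugin serves your local models. The function names are made up for this example.

```python
import subprocess


def build_query_command(prompt: str, model: str = "gemma3:4b") -> list[str]:
    """Build the llm invocation; the model ID is an assumed placeholder."""
    return ["llm", "-m", model, prompt]


def ask_digest(digest_path: str, prompt: str, model: str = "gemma3:4b") -> str:
    """Pipe a digest file into llm on stdin and return the model's reply."""
    with open(digest_path, encoding="utf-8") as digest:
        result = subprocess.run(
            build_query_command(prompt, model),
            stdin=digest,  # llm combines piped stdin with the prompt argument
            capture_output=True,
            text=True,
            check=True,
        )
    return result.stdout
```

Usage would look like `ask_digest("digest.txt", "Explain this repo like I'm 5")`, with `digest.txt` being whatever file you saved the digest to.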

Remote Repository Example

Analyzes the `requests` Python library from GitHub

Full ingestion = too many tokens (2.6M)

Solution: include only the `/source` directory (`-i source`)

Output digest becomes manageable (~42K tokens)
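
Why including one directory helps so much is easy to see with a quick estimate. The sketch below assumes about 4 characters per token, which is only a rule of thumb, and uses hypothetical helper names; real tokenizers and Gitingest's own counts will differ.

```python
from fnmatch import fnmatchcase


def estimate_tokens(text: str) -> int:
    """Crude estimate: assume roughly 4 characters per token."""
    return len(text) // 4


def select_included(files: dict[str, str], include: list[str]) -> dict[str, str]:
    """Keep only files whose relative path matches an include pattern."""
    return {
        path: text
        for path, text in files.items()
        if any(fnmatchcase(path, pattern) for pattern in include)
    }


def fits_context(files: dict[str, str], limit: int) -> bool:
    """Check whether the combined estimate stays within a token budget."""
    return sum(estimate_tokens(text) for text in files.values()) <= limit
```

With a mapping of paths to file contents, `select_included(files, ["source/*"])` followed by `fits_context(selected, limit)` tells you whether the trimmed digest has a chance of fitting your model's context window.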

Advanced Prompting and Iteration

Example use case: extend `requests` to support JS rendering

Model suggests using Playwright

Promotes structured prompt chaining via `llm -c` (conversation mode)

Notes caveats: must let prior completions finish to maintain context
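
Prompt chaining can be sketched as a loop that sends the first prompt normally and every follow-up with `-c`, which continues the most recent conversation. Each call blocks until the previous completion has finished, which matches the caveat above. The model ID and the function names are assumptions for this example, and the `runner` parameter exists only so the logic can be tried without a model installed.

```python
import subprocess
from typing import Callable


def run_llm(args: list[str]) -> str:
    """Run one llm command and wait for it to finish before returning."""
    return subprocess.run(args, capture_output=True, text=True, check=True).stdout


def chain_prompts(
    prompts: list[str],
    model: str = "gemma3:12b",  # assumed model ID; depends on your setup
    runner: Callable[[list[str]], str] = run_llm,
) -> list[str]:
    """Send prompts in order; follow-ups use -c to continue the conversation."""
    replies = []
    for index, prompt in enumerate(prompts):
        if index == 0:
            args = ["llm", "-m", model, prompt]
        else:
            args = ["llm", "-c", prompt]
        replies.append(runner(args))  # blocks, so context is saved first
    return replies
```

Because the calls run one after another, the follow-up never starts before the first answer has been logged to the conversation.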

If you believe your product or service can fulfill a true need, it is your moral obligation to sell it.

Zig Ziglar